Right Place, Right Time

Fortunate to be at the right place at the right time, I have been an active participant in (or at least had a front row seat for) some of the most dramatic transformations in computing. Many of these transformations have shown some similar attributes, notably the importance of people willing to question what is really valuable – especially those who are willing to dig for the hard data necessary to induce others to question their assumptions and adjust their biases.

Today's world is implemented in the current technology and terminology; nevertheless, an understanding of history can be useful to understand how we got where we are, and to point out some of the more promising ways forward.

Dawn of Personal Computing: What and Why?

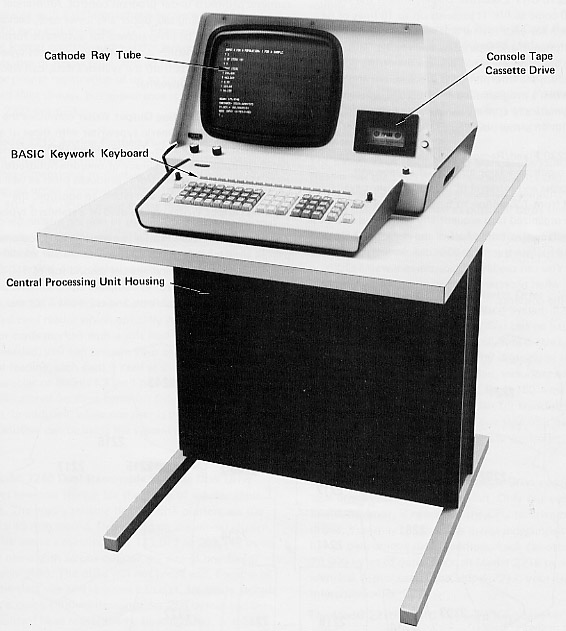

I got my first exposure to computing in the mid '70s when my middle school in one of Boston's tech suburbs was gifted with one of the earliest "personal computers". A storage closet was cleared out to become a "computer lab" with a full-sized table dedicated to holding this 60-pound electronic beast and all of its various support units. The only display was a small monochrome CRT with at most 16 lines of text, along with a modified typewriter attached that could be used to dump out hardcopy, and our unit's only "storage" was on cassette tapes. And yet, this system was clearly personal in nature – it booted in seconds into an interactive environment. Even if the display was small, the updates were immediate, and the system was quite effective.

The question for my school was what to do with this thing? Our math teacher got us all to go through the steps to print out geometric shapes and crude sine waves, and many of us worked through other extensions of our "new math" curriculum. But for us kids, the real answer was obvious: games! Our system came with a few cassette tapes including some BASIC games, which were of course immediately very popular. My first taste of the power of programming was when I hacked together something that could overwrite text on the screen, which quickly evolved into a tiny game with several animated "horses" (actually just character strings using marks such as an apostrophe for the head and slashes for the legs) running races across the screen – which promptly got banned during school hours because the game was distracting too many students during class time.

A few years later my upscale high school was gifted a PDP-11 minicomputer, and the experience was entirely different – a stereotypical example of tech isolation. The high school's system was set up in the basement, alone in a locked room, with no access allowed without first earning a science teacher's approval. Only a very small number of students wanted to have anything to do with that system, and even though I already understood some coding and already had some idea of what that system could do, I saw no benefit to spending my time on anything that isolated. The power of any tool is limited by its degree of separation from its point of leverage.

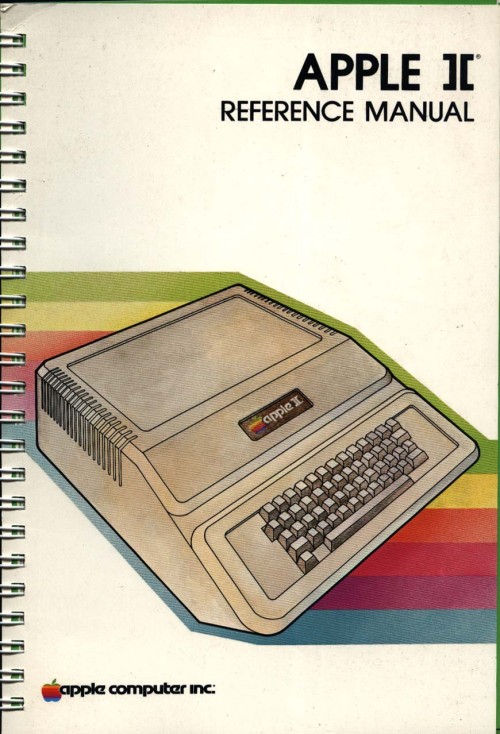

At the opposite end of the spectrum, in college my sophomore roommate had been loaned an Apple II by his father, and as I was the only night owl in our apartment I managed to talk my way into using that computer after my roommate went to bed. Starting with RobotWar and eventually making heavy use of the amazingly detailed early Apple manuals, I was able to recover my early coding skills and even teach myself how to code in 6502 Assembly, eventually completing a variety of little visual games pushing the limits of that 16-color graphics system. All by borrowing time on an open system sitting on a corner desk in our apartment's common room.

My roommate's father had very high hopes for what these personal systems could do for small businesses like his, especially with tools like VisiCalc. Working with VisiCalc was a breakthrough moment for appreciating how these systems would change business computing. Even in its most rudimentary form, there was undeniable power in VisiCalc's flexible tables, and in those days entering one change and watching every value recalculate automatically and immediately was impressively close to magic. Decades later, as I work with data visualizations, I can still hear this man's wish: "If you guys could just write a good charting program that could work with this stuff..."

At the time the circle of coders for these personal systems was rather small, and even in the relative backwaters of my university, for nothing more than the cost of a few beers, my friends and I got the chance to swap advice with Richard Garriott and hear stories about the challenges of creating the early Ultima games. And even then, while it was clear that the little systems could change the way business worked, it was clearer still that there was a larger audience beyond the business world that was eager for the ever more immersive experiences that these personal computers could offer.

Bridging between tech and literary worlds as an English Major spending inordinate amounts of time in the Computer Science department, I fell into an interesting opportunity (which later became the genesis of this website's name). Ray Montgomery, a local author and early coder, was at the leading edge of what has become interactive fiction. At that time he was working to squeeze his book-length text and some graphical mini-games to fit within the limits of early Atari consoles. Running up against deadlines, he hired me to help finish and test his project, and I found myself deep in an excellent introduction to practical computing. Key lessons came from supporting the decisions about what to keep and what to drop in order to satisfy the specific target audience. Computers can do lots of cool tricks, but in the end the only important tricks are those that serve the overall experience – judicious editing is as important for every application on a computer as it is for any published text.

Simpler is Better, Early Unix and RISC

Halfway through my years in college, there was a serious rift that tore through parts of our engineering faculty. Several professors abruptly left the school, and eventually the remaining Computer Science department broke away from the main core of the computing resources on campus. The new CS department abruptly shifted their focus from mainframes running FORTRAN, COBOL, and Pascal over to a new curriculum focused on emerging paradigms such as Unix, RISC architecture, and open source software. This break was massively disruptive to the engineering college, but the resulting chaos provided me just the break I needed to enable a switch from my official status as just an English major taking some Computer Science classes and skip past a lot of waitlists and prerequisites to become not only a full-time CS student in the engineering college but also eventually a paid teaching assistant for some of the new higher level CS courses.

Before long, instead of just being yet another undergraduate programmer seeking an open terminal on the big timesharing systems, I had earned root login privileges and a key to a new machine room filled with a variety of PDP-11s and VAXes and other systems all running BSD Unix. Chaos had led to opportunity, including an offer of a scholarship to stay for a master's degree, giving me hands-on, source-level experience across what proved to be a wide range of both single- and multi-user systems running several variants of BSD and System V Unix.

More interestingly, my work landed right in the middle of the RISC-vs-CISC arguments. I had studied RISC architectures in school, and when I graduated I was hired into Hewlett-Packard, originally joining their core DBMS team and then the HP-UX kernel team before settling into what became a lead role in the system performance community. At HP I was actively involved in a series of David-takes-on-Goliath battles that were reshaping computing. The first battle that attracted me to HP was the RISC-vs-CISC design argument, and HP's PA-RISC proved to be every bit as interesting as I had hoped, especially the out-of-order execution capabilities, which echoed a few of the DataFlow architecture ideas my thesis advisor had been championing.

But soon I found myself involved in the arena of competitive performance, where the challenge was not just RISC versus CISC but also taking on mainframe computers and proving that minicomputers could be effective solutions in core business computing accounts. Here I was embedded right in the midst of the arguments between ambitious designs and pragmatic delivery, and our business flourished because we had a visionary architecture but, importantly, we also had the disciplined focus to deliver the features that our customers were willing to pay extra to have right now.

By the time the "dot com bubble" began to heat up, what had been a relatively small skunkworks was now a multi-billion dollar business, and I was managing a team of engineers working on web-server performance and analysis of new web technologies. Our base work was stressing systems and developing tests to highlight how our servers could scale faster than our customers' promised growth plans. Our bonus work came from projects assigned by the company executives to evaluate a wide variety of web technologies that were being debated in the boardroom, either pitched as potential acquisitions or tracked as expected competition – a fascinating stream of wild ideas and even wilder expectations. In a period where it seemed like everyone was being rewarded for attempting an even more outlandish claim than the one before, my team was prospering precisely because we had earned a solid reputation for honest work identifying flaws and providing well-reasoned criticisms.

Efficiency Rules

Once the "dot com" bubble burst, the industry's attention shifted from hyping claims of 'biggest' and 'best' over to managing costs and improving efficiency. And here again I lucked into a front-row seat to the next big transformation, this time helping commodity servers (high volume, low cost, x86-based servers) showcase their value proposition versus the existing minicomputers. Where I had been proving standard Unix could overtake the proprietary systems, now the challenge was showing that Linux and Microsoft's Windows Server were just as capable and a whole lot cheaper – matching the performance results was relatively easy; the harder task was stripping marketing claims down to bare facts and then reassembling a clearer picture of users' real costs. Once again, with tactical analysis and robust engineering, we were successful in proving the underdog could win, and again our marketplace transformed the industry.

By the time that commodity servers had taken the drama out of the competitive performance arena, I took a chance to leave HP and go to Intel, analyzing and measuring an entirely different transformation: the mobility revolution. My transition to this space was with laptop PCs, as part of the team working towards the vision of "all day computing" – figuring out the steps to move from heavy laptop PCs that maybe lasted a few hours between charges to thin and light notebooks that could last all day on a single charge. The key was to put aside the grand statements from the many participants and instead establish clear and verifiable measurements of comparable battery life experience, and then to track everyone's progress towards those overall goals.

Even as the market delivered this vision with new laptops only a third of the weight and yet still more than twice the battery life, I had already embarked on a new assignment: evaluating when, where, and how smartphones would threaten laptops. Starting alone, creating a new lab out of a basement storeroom, we built a team that would soon include a dozen people directly measuring all sorts of phone and tablet devices, working with dozens of partners across the country to generate some of the most hotly debated reports to be presented to the executive team. Our conclusions were controversial, but we had done the work to make our measurements both robust and representative, so when the boardroom arguments erupted, instead of the usual shoot-the-messenger reactions, our data and our methods were used to drive change across the company.

The common theme was that very few customers ever care about technology for technology's sake. The vast majority of the marketplace just wants solutions that provide the desired experience with a minimum of cost and hassle. If there are alternatives that satisfy these needs more easily, then it will not take long before even the strongest products suffer from the changing tides. The key, as always, is having a good understanding of the strengths of one's products and at least as good an understanding of what the market is willing to pay for.

Observations

Today, with the freedom to work on the projects that most intrigue me, I am playing in the deep waters of Data Science. And yet, even in these new areas of discovery, I find myself once again watching how over-hyped energy quickly dissipates, and how real progress comes from the stable engineering of those who have a solid grip on what is really necessary to address the problems at hand.

One of the few constants through all of these transformations has been the value of those who are willing to provide clear and steadfast advice in spite of pressure to just ride the current trends. Management may not immediately appreciate the push-back, but executive decisions will only be as good as the information available at the time, and if key people are afraid to speak up then the important decisions will be made in an information vacuum.

To be successful in these situations takes a lot of preparation, and often a bit of good fortune, but all these opportunities start with a willingness to stand up and draw attention to well-founded data that reveals insights we all need to see.